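Image-to-prompt, the ability to analyze an image and generate an AI-ready text prompt from it, has gone from a novelty to a core feature of dozens of creative tools. Design apps use it for style matching. Browser extensions use it to analyze the image a user is currently viewing. Content pipelines use it to batch-process visual assets.

If you want to add this capability to your own application, you have three serious options: Claude Vision (Anthropic), GPT-4V (OpenAI), and Gemini Vision (Google). This guide compares all three, walks through the technical integration with working code, and provides prompt templates that produce high-quality structured output.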

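Why developers need an image-to-prompt API

The use cases are broader than you might first expect:

- Creative tools: let users upload an inspiration image and get an AI-ready prompt for generation tools (Midjourney, Stable Diffusion, DALL·E)

- Design platforms: analyze uploaded mood boards and extract a style guide

- E-commerce: generate product descriptions from product photos

- Content moderation pipelines: understand image content for classification and tagging

- Accessibility tools: generate detailed alt text and image descriptions

- Game asset pipelines: automatically tag and describe assets across large libraries

- Social media tools: generate captions and hashtags from uploaded images

- Photo organization apps: run semantic search over image collections

The three main API options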

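| API | Provider | Image input | Price (approx.) | Best for |
|---|---|---|---|---|
| Claude Vision (claude-haiku-4-5 / claude-sonnet) | Anthropic | Base64 or URL | $0.25–$3 / 1M input tokens | Detailed prompts, instruction following |
| GPT-4V / GPT-4o | OpenAI | Base64 or URL | $2.50–$10 / 1M input tokens | Broad knowledge, conversational integration |
| Gemini Vision (1.5 Flash/Pro) | Google | Base64, URL, or GCS | $0.075–$3.50 / 1M input tokens | High throughput, cost-sensitive workloads |

For image-to-prompt specifically, Claude Vision tends to produce the most structured, art-direction-aware output. If you are already in the OpenAI ecosystem, GPT-4o is the most general-purpose choice. Gemini 1.5 Flash is the most economical option for high-throughput pipelines, and suits cases where cost matters more than quality.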

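Claude Vision API: setup and authentication

First, install the Anthropic SDK:

pip install anthropic

Or, for Node.js:

npm install @anthropic-ai/sdk

Get an API key from console.anthropic.com and set it as an environment variable:

export ANTHROPIC_API_KEY="sk-ant-api03-..."

Image encoding: Base64 vs URL

Claude Vision accepts images in two forms:

Base64 (recommended for local files): encode the image as a Base64 string and include it directly in the API request. JPEG, PNG, GIF, and WebP are supported. The maximum size is 5MB per image.

URL (recommended for web-hosted images): pass a publicly accessible image URL and Claude fetches the image server-side. The URL must be reachable without authentication.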
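As a rough illustration of the two input forms, the sketch below builds the image content block for each one and applies the format and 5MB checks described above. The helper names (`image_block_from_bytes`, `image_block_from_path`, `image_block_from_url`) are hypothetical and not part of the Anthropic SDK; the dictionary shapes mirror the request bodies used in the examples in this guide.

```python
import base64
from pathlib import Path

MAX_IMAGE_BYTES = 5 * 1024 * 1024  # 5MB per-image limit described above

# File extension -> media type for the four supported formats
MEDIA_TYPES = {
    ".jpg": "image/jpeg",
    ".jpeg": "image/jpeg",
    ".png": "image/png",
    ".gif": "image/gif",
    ".webp": "image/webp",
}


def image_block_from_bytes(data: bytes, media_type: str) -> dict:
    """Build a Base64 image content block from raw image bytes (hypothetical helper)."""
    if media_type not in MEDIA_TYPES.values():
        raise ValueError(f"Unsupported media type: {media_type}")
    if len(data) > MAX_IMAGE_BYTES:
        raise ValueError(f"Image is {len(data)} bytes; the limit is {MAX_IMAGE_BYTES}")
    return {
        "type": "image",
        "source": {
            "type": "base64",
            "media_type": media_type,
            "data": base64.standard_b64encode(data).decode("utf-8"),
        },
    }


def image_block_from_path(path: str) -> dict:
    """Read a local file and build a Base64 image block, rejecting unknown extensions."""
    suffix = Path(path).suffix.lower()
    if suffix not in MEDIA_TYPES:
        raise ValueError(f"Unsupported file extension: {suffix}")
    return image_block_from_bytes(Path(path).read_bytes(), MEDIA_TYPES[suffix])


def image_block_from_url(url: str) -> dict:
    """Build a URL image block; the URL must be publicly reachable."""
    if not url.startswith(("http://", "https://")):
        raise ValueError("Image URL must start with http:// or https://")
    return {"type": "image", "source": {"type": "url", "url": url}}
```

Either block can then be placed in the `content` list of a user message, next to a text block containing the instruction.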

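A system prompt template for generating AI-ready prompts

The system prompt is the most important part of your implementation. A weak system prompt produces generic image descriptions. A carefully designed one produces structured, actionable prompts that work well with image generation models.

The following system prompt template produces high-quality output compatible with Midjourney and Stable Diffusion: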

You are an expert AI art prompt engineer. When given an image, analyze it thoroughly and generate an optimized text prompt that would recreate a similar image using an AI image generation tool.

Your output must follow this structure:

PROMPT:

[Single paragraph, comma-separated descriptors. Include: subject description, environment/setting, lighting conditions, color palette, mood/atmosphere, art style, rendering quality. Be specific and concrete. 60-120 words.]

STYLE NOTES:

[2-3 sentences describing the visual style, medium, and any distinctive aesthetic characteristics]

NEGATIVE PROMPT:

[Comma-separated list of elements to exclude for best results]

MODEL RECOMMENDATION:

[One of: Midjourney, Stable Diffusion, DALL·E 3, Flux, Ideogram — based on the image style]

Do not include any preamble, explanation, or text outside this structure.

Python implementation: Claude Vision

import anthropic
import base64
from pathlib import Path


def image_to_prompt(image_path: str, target_model: str = "Midjourney") -> dict:
    """
    Analyze an image and generate an AI art prompt.

    Args:
        image_path: Path to local image file
        target_model: Target AI model ("Midjourney", "Stable Diffusion", etc.)

    Returns:
        dict with 'prompt', 'style_notes', 'negative_prompt', 'model_recommendation'
    """
    # The client reads ANTHROPIC_API_KEY from the environment
    client = anthropic.Anthropic()

    # Read and encode image
    image_data = Path(image_path).read_bytes()
    base64_image = base64.standard_b64encode(image_data).decode("utf-8")

    # Detect media type from the file extension (falls back to JPEG)
    suffix = Path(image_path).suffix.lower()
    media_type_map = {
        ".jpg": "image/jpeg",
        ".jpeg": "image/jpeg",
        ".png": "image/png",
        ".gif": "image/gif",
        ".webp": "image/webp",
    }
    media_type = media_type_map.get(suffix, "image/jpeg")

    system_prompt = """You are an expert AI art prompt engineer. Analyze the provided image and generate an optimized prompt for AI image generation.

Output this exact structure:

PROMPT:
[60-120 word comma-separated prompt covering subject, environment, lighting, colors, mood, style, quality tokens]

STYLE NOTES:
[2-3 sentences on visual style and distinctive characteristics]

NEGATIVE PROMPT:
[Comma-separated exclusion list]

MODEL RECOMMENDATION:
[Best model: Midjourney, Stable Diffusion, DALL·E 3, Flux, or Ideogram]"""

    message = client.messages.create(
        model="claude-haiku-4-5",
        max_tokens=1024,
        system=system_prompt,
        messages=[
            {
                "role": "user",
                "content": [
                    {
                        "type": "image",
                        "source": {
                            "type": "base64",
                            "media_type": media_type,
                            "data": base64_image,
                        },
                    },
                    {
                        "type": "text",
                        "text": f"Analyze this image and generate an AI art prompt optimized for {target_model}.",
                    },
                ],
            }
        ],
    )

    # Parse response
    response_text = message.content[0].text
    return parse_prompt_response(response_text)


def parse_prompt_response(text: str) -> dict:
    """Parse structured response into a dictionary."""
    result = {}
    sections = {
        "prompt": "PROMPT:",
        "style_notes": "STYLE NOTES:",
        "negative_prompt": "NEGATIVE PROMPT:",
        "model_recommendation": "MODEL RECOMMENDATION:",
    }

    lines = text.split("\n")
    current_section = None
    current_content = []

    for line in lines:
        line = line.strip()
        matched = False
        for key, header in sections.items():
            if line.startswith(header):
                # A new header closes the section collected so far
                if current_section:
                    result[current_section] = " ".join(current_content).strip()
                current_section = key
                remainder = line[len(header):].strip()
                current_content = [remainder] if remainder else []
                matched = True
                break
        if not matched and current_section and line:
            current_content.append(line)

    # Flush the final section
    if current_section:
        result[current_section] = " ".join(current_content).strip()

    return result


# Example usage
if __name__ == "__main__":
    result = image_to_prompt("reference_photo.jpg", target_model="Midjourney")
    print("PROMPT:", result.get("prompt"))
    print("NEGATIVE:", result.get("negative_prompt"))
    print("RECOMMENDED MODEL:", result.get("model_recommendation"))

JavaScript / Node.js implementation

import Anthropic from "@anthropic-ai/sdk";
import fs from "fs";
import path from "path";

const client = new Anthropic({
  apiKey: process.env.ANTHROPIC_API_KEY,
});

const SYSTEM_PROMPT = `You are an expert AI art prompt engineer. Analyze the provided image and generate an optimized prompt for AI image generation.

Output this exact structure:

PROMPT:
[60-120 word comma-separated prompt covering subject, environment, lighting, colors, mood, style, quality tokens]

STYLE NOTES:
[2-3 sentences on visual style and distinctive characteristics]

NEGATIVE PROMPT:
[Comma-separated exclusion list]

MODEL RECOMMENDATION:
[Best model: Midjourney, Stable Diffusion, DALL·E 3, Flux, or Ideogram]`;

async function imageToPrompt(imagePath, targetModel = "Midjourney") {
  // Read and encode the image
  const imageBuffer = fs.readFileSync(imagePath);
  const base64Image = imageBuffer.toString("base64");

  // Detect media type from the file extension (falls back to JPEG)
  const ext = path.extname(imagePath).toLowerCase();
  const mediaTypeMap = {
    ".jpg": "image/jpeg",
    ".jpeg": "image/jpeg",
    ".png": "image/png",
    ".gif": "image/gif",
    ".webp": "image/webp",
  };
  const mediaType = mediaTypeMap[ext] || "image/jpeg";

  const message = await client.messages.create({
    model: "claude-haiku-4-5",
    max_tokens: 1024,
    system: SYSTEM_PROMPT,
    messages: [
      {
        role: "user",
        content: [
          {
            type: "image",
            source: {
              type: "base64",
              media_type: mediaType,
              data: base64Image,
            },
          },
          {
            type: "text",
            text: `Analyze this image and generate an AI art prompt optimized for ${targetModel}.`,
          },
        ],
      },
    ],
  });

  const responseText = message.content[0].text;
  return parsePromptResponse(responseText);
}

function parsePromptResponse(text) {
  const result = {};
  const sections = {
    prompt: "PROMPT:",
    style_notes: "STYLE NOTES:",
    negative_prompt: "NEGATIVE PROMPT:",
    model_recommendation: "MODEL RECOMMENDATION:",
  };

  let currentSection = null;
  let currentLines = [];

  for (const rawLine of text.split("\n")) {
    const line = rawLine.trim();
    let matched = false;

    for (const [key, header] of Object.entries(sections)) {
      if (line.startsWith(header)) {
        // A new header closes the section collected so far
        if (currentSection) {
          result[currentSection] = currentLines.join(" ").trim();
        }
        currentSection = key;
        const remainder = line.slice(header.length).trim();
        currentLines = remainder ? [remainder] : [];
        matched = true;
        break;
      }
    }

    if (!matched && currentSection && line) {
      currentLines.push(line);
    }
  }

  // Flush the final section
  if (currentSection) {
    result[currentSection] = currentLines.join(" ").trim();
  }

  return result;
}

// Example usage (top-level await requires an ES module)
const result = await imageToPrompt("./reference.png", "Stable Diffusion");
console.log("Prompt:", result.prompt);
console.log("Negative:", result.negative_prompt);

Using a URL instead of Base64

async function imageToPromptFromUrl(imageUrl, targetModel = "Midjourney") {
  const message = await client.messages.create({
    model: "claude-haiku-4-5",
    max_tokens: 1024,
    system: SYSTEM_PROMPT,
    messages: [
      {
        role: "user",
        content: [
          {
            type: "image",
            // The URL must be publicly reachable; the image is fetched server-side
            source: {
              type: "url",
              url: imageUrl,
            },
          },
          {
            type: "text",
            text: `Analyze this image and generate an AI art prompt optimized for ${targetModel}.`,
          },
        ],
      },
    ],
  });

  return parsePromptResponse(message.content[0].text);
}

Error handling: rate limits, image size limits, unsupported formats

A production implementation must handle these failure cases gracefully:

async function imageToPromptSafe(imagePath, targetModel = "Midjourney") {
  // Check file size before upload (5MB limit)
  const stats = fs.statSync(imagePath);
  const fileSizeMB = stats.size / (1024 * 1024);
  if (fileSizeMB > 5) {
    throw new Error(`Image too large: ${fileSizeMB.toFixed(1)}MB. Max 5MB.`);
  }

  // Check format
  const ext = path.extname(imagePath).toLowerCase();
  const supported = [".jpg", ".jpeg", ".png", ".gif", ".webp"];
  if (!supported.includes(ext)) {
    throw new Error(`Unsupported format: ${ext}. Use JPG, PNG, GIF, or WebP.`);
  }

  try {
    return await imageToPrompt(imagePath, targetModel);
  } catch (error) {
    if (error.status === 429) {
      // Rate limited: wait for the Retry-After interval (default 60s), then retry once.
      // Depending on the SDK version, error.headers may be a Headers object or a plain object.
      const headers = error.headers;
      const rawRetryAfter =
        typeof headers?.get === "function" ? headers.get("retry-after") : headers?.["retry-after"];
      const retryAfter = Number.parseInt(rawRetryAfter ?? "60", 10) || 60;
      console.warn(`Rate limited. Retry after ${retryAfter}s`);
      await new Promise(resolve => setTimeout(resolve, retryAfter * 1000));
      return await imageToPrompt(imagePath, targetModel); // single retry
    }
    if (error.status === 400) {
      throw new Error(`Bad request: check image format and size: ${error.message}`);
    }
    throw error;
  }
}
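
The handler above retries once after the `Retry-After` interval. Where several retries are acceptable, exponential backoff with jitter is a common alternative. The following is a minimal Python sketch of that technique, not part of any SDK: `retry_with_backoff` and its parameters are hypothetical names, and the caller decides which exceptions count as retryable (with the Anthropic Python SDK that could be a check for `anthropic.RateLimitError`).

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    call: Callable[[], T],
    is_retryable: Callable[[Exception], bool],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run call(), retrying retryable failures with exponentially growing delays."""
    for attempt in range(max_attempts):
        try:
            return call()
        except Exception as exc:
            is_last_attempt = attempt == max_attempts - 1
            if is_last_attempt or not is_retryable(exc):
                raise
            # Delay doubles each attempt (1s, 2s, 4s, ...) and is capped at max_delay
            delay = min(max_delay, base_delay * 2 ** attempt)
            # Add up to 50% random jitter so parallel workers do not retry in lockstep
            sleep(delay + random.uniform(0, delay / 2))
    raise ValueError("max_attempts must be at least 1")
```

The `sleep` parameter exists only so the delay can be replaced in tests; in normal use the default `time.sleep` applies.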

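Cost comparison: Claude vs GPT-4V vs Gemini Vision

Providers count image tokens differently. Here is a practical comparison for a typical 1024x1024 JPEG (~300KB):

| API | Image input (tokens) | Text output (tokens) | Cost per 1,000 images |
|---|---|---|---|
| Claude Haiku 4.5 | ~1,600 input tokens | ~300 output tokens | ~$0.40 |
| Claude 3.5 Sonnet | ~1,600 input tokens | ~300 output tokens | ~$3.60 |
| GPT-4o mini | ~765 input tokens (low detail) | ~300 output tokens | ~$0.23 |
| GPT-4o | ~765 input tokens | ~300 output tokens | ~$2.70 |
| Gemini 1.5 Flash | ~258 input tokens | ~300 output tokens | ~$0.08 |
| Gemini 1.5 Pro | ~258 input tokens | ~300 output tokens | ~$0.90 |

Prices are estimates as of March 2026. Verify current pricing on each provider's website.

For consumer apps that process hundreds of images a day, Gemini Flash or GPT-4o mini is the most cost-effective. For high-quality pipelines where prompt accuracy matters most, Claude Haiku offers the best quality-to-cost ratio.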
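
To re-run this kind of estimate with current prices, the arithmetic can be sketched as below. The function name and parameters are hypothetical, and the prices in the example call are made-up placeholders rather than any provider's real rates; substitute the per-million-token prices from the provider's pricing page.

```python
def cost_per_1000_images(
    image_tokens: int,
    output_tokens: int,
    input_price_per_mtok: float,
    output_price_per_mtok: float,
    text_input_tokens: int = 0,
) -> float:
    """Estimate the USD cost of analyzing 1,000 images.

    Prices are in USD per 1M tokens. text_input_tokens covers the system
    prompt and instruction text sent alongside each image.
    """
    input_cost = (image_tokens + text_input_tokens) * input_price_per_mtok
    output_cost = output_tokens * output_price_per_mtok
    per_image = (input_cost + output_cost) / 1_000_000
    return per_image * 1000


# Placeholder prices (NOT real rates): $0.50 input and $2.00 output per 1M tokens
estimate = cost_per_1000_images(
    image_tokens=1600,
    output_tokens=300,
    input_price_per_mtok=0.50,
    output_price_per_mtok=2.00,
)
print(f"${estimate:.2f} per 1,000 images")  # prints "$1.40 per 1,000 images"
```

Output tokens are usually priced higher than input tokens, so a long generated prompt can cost as much as the image itself.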

Caching strategies to reduce API cost

For production deployments, caching is essential. The same image should never be analyzed twice:

import crypto from "crypto";
import fs from "fs";
import Redis from "ioredis";

const redis = new Redis(process.env.REDIS_URL);
const CACHE_TTL = 60 * 60 * 24 * 30; // 30 days

async function imageToPromptCached(imagePath, targetModel = "Midjourney") {
  // Create cache key from image content hash + model
  const imageBuffer = fs.readFileSync(imagePath);
  const imageHash = crypto
    .createHash("sha256")
    .update(imageBuffer)
    .digest("hex");
  const cacheKey = `img2prompt:${imageHash}:${targetModel}`;

  // Check cache
  const cached = await redis.get(cacheKey);
  if (cached) {
    return JSON.parse(cached);
  }

  // Generate prompt (imageToPrompt expects a file path, not Base64 data)
  const result = await imageToPrompt(imagePath, targetModel);

  // Cache result
  await redis.setex(cacheKey, CACHE_TTL, JSON.stringify(result));

  return result;
}

Production architecture: the serverless function pattern

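For web applications, the most practical production pattern is a serverless function that accepts image data and returns a prompt. This is the pattern ImageToPrompt itself uses, deployed on Vercel: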

// api/analyze.ts (Vercel serverless function)
import type { VercelRequest, VercelResponse } from "@vercel/node";
import Anthropic from "@anthropic-ai/sdk";

// SYSTEM_PROMPT and parsePromptResponse are the ones defined in the Node.js
// example above; import them from wherever you keep them in your project.

const client = new Anthropic({ apiKey: process.env.ANTHROPIC_API_KEY });

export default async function handler(req: VercelRequest, res: VercelResponse) {
  // CORS: set headers first so error responses and preflights carry them too
  res.setHeader("Access-Control-Allow-Origin", process.env.ALLOWED_ORIGIN || "*");
  res.setHeader("Access-Control-Allow-Methods", "POST, OPTIONS");
  res.setHeader("Access-Control-Allow-Headers", "Content-Type");

  // Answer the browser's preflight request for cross-origin JSON POSTs
  if (req.method === "OPTIONS") {
    return res.status(204).end();
  }

  if (req.method !== "POST") {
    return res.status(405).json({ error: "Method not allowed" });
  }

  const { image, mediaType, targetModel } = req.body;

  if (!image || !mediaType) {
    return res.status(400).json({ error: "image and mediaType are required" });
  }

  try {
    const message = await client.messages.create({
      model: "claude-haiku-4-5",
      max_tokens: 1024,
      system: SYSTEM_PROMPT,
      messages: [
        {
          role: "user",
          content: [
            {
              type: "image",
              source: { type: "base64", media_type: mediaType, data: image },
            },
            {
              type: "text",
              text: `Generate an AI art prompt optimized for ${targetModel || "Midjourney"}.`,
            },
          ],
        },
      ],
    });

    const parsed = parsePromptResponse(message.content[0].text);
    return res.status(200).json(parsed);
  } catch (error: unknown) {
    const err = error as { status?: number; message?: string };
    if (err.status === 429) {
      return res.status(429).json({ error: "Rate limit exceeded" });
    }
    return res.status(500).json({ error: "Analysis failed" });
  }
}

Real-world use cases

Batch-processing a directory of images

import { glob } from "glob";
import { writeFileSync } from "fs";

async function batchProcess(directory, targetModel = "Midjourney") {
  const images = await glob(`${directory}/**/*.{jpg,jpeg,png,webp}`);
  const results = [];

  for (const imagePath of images) {
    try {
      console.log(`Processing: ${imagePath}`);
      const result = await imageToPromptSafe(imagePath, targetModel);
      results.push({ file: imagePath, ...result });

      // Respectful rate limiting: 1 request per second
      await new Promise(resolve => setTimeout(resolve, 1000));
    } catch (error) {
      // Record the failure and continue with the next image
      console.error(`Failed: ${imagePath}`, error.message);
      results.push({ file: imagePath, error: error.message });
    }
  }

  writeFileSync("prompts.json", JSON.stringify(results, null, 2));
  console.log(`Processed ${results.length} images`);
}

batchProcess("./assets/reference-images", "Stable Diffusion");

Browser extension pattern

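For a browser extension that analyzes the image a user is viewing, you need to grab the image from the page, convert it to Base64 in a content script, send it to your backend serverless function (so the API key stays server-side), and then display the returned prompt in the popup.

Key caveat: never expose your Anthropic API key in client-side JavaScript. Always proxy through a backend function that validates the request before forwarding it to the Anthropic API.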
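
What "validates the request" might involve is sketched below in Python, under the assumption that the backend receives the same `image`, `mediaType`, and `targetModel` fields as the serverless function in this guide. The function name, the limits, and the model allowlist are illustrative choices, not a fixed contract.

```python
import base64
import binascii
from typing import Tuple

MAX_IMAGE_BYTES = 5 * 1024 * 1024  # 5MB per-image limit
ALLOWED_MEDIA_TYPES = {"image/jpeg", "image/png", "image/gif", "image/webp"}
# Allowlist, because targetModel is interpolated into the prompt text
ALLOWED_TARGET_MODELS = {"Midjourney", "Stable Diffusion", "DALL·E 3", "Flux", "Ideogram"}


def validate_analyze_request(body: dict) -> Tuple[bytes, str, str]:
    """Validate a request body; return (image bytes, media type, target model).

    Raises ValueError with a client-safe message when the body is invalid.
    """
    image = body.get("image")
    media_type = body.get("mediaType")
    target_model = body.get("targetModel") or "Midjourney"

    if not isinstance(image, str) or not image:
        raise ValueError("image is required and must be a Base64 string")
    if media_type not in ALLOWED_MEDIA_TYPES:
        raise ValueError("mediaType must be one of: " + ", ".join(sorted(ALLOWED_MEDIA_TYPES)))
    if target_model not in ALLOWED_TARGET_MODELS:
        raise ValueError("targetModel is not supported")

    try:
        # validate=True rejects characters outside the Base64 alphabet
        raw = base64.b64decode(image, validate=True)
    except (binascii.Error, ValueError) as exc:
        raise ValueError("image is not valid Base64") from exc

    if len(raw) > MAX_IMAGE_BYTES:
        raise ValueError("image exceeds the 5MB limit")
    return raw, media_type, target_model
```

A handler would call this first, return a 400 response on `ValueError`, and only then forward the image to the API.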

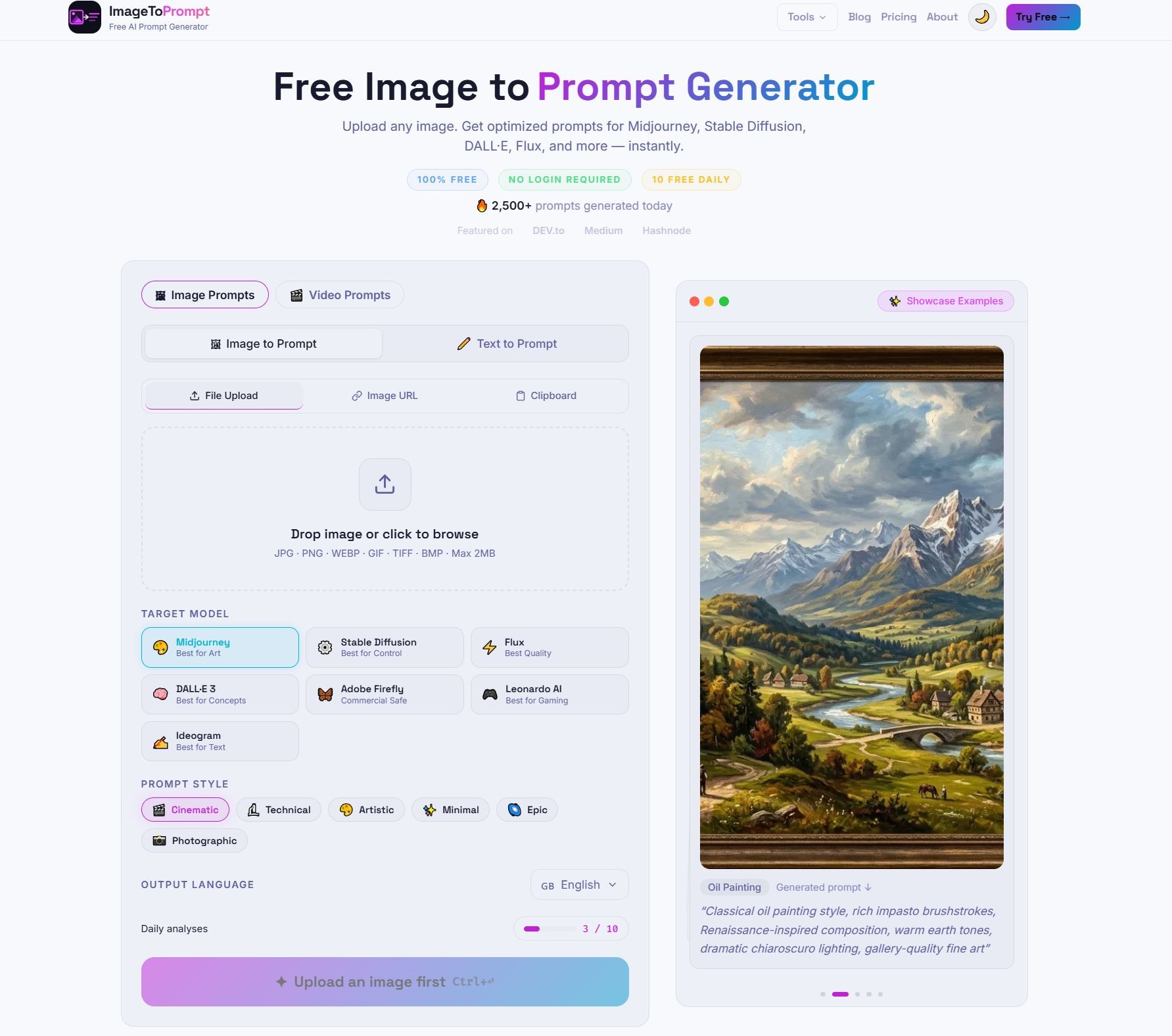

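ImageToPrompt as a reference implementation

ImageToPrompt (the tool on this site) is a working reference implementation of this pattern. It uses Claude Haiku for image analysis, a Vercel serverless function as the backend, Upstash Redis for rate limiting (10 requests per IP per day on the free tier), and a React frontend that handles image upload, preview, and prompt display.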
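
To make the "10 requests per IP per day" idea concrete, here is a minimal fixed-window limiter in Python. It is an in-memory stand-in for illustration only and is not how ImageToPrompt implements it: a serverless deployment needs a shared store such as Redis, because function instances do not share memory. The class name and parameters are hypothetical.

```python
import time
from typing import Callable, Dict, Tuple


class DailyRateLimiter:
    """Fixed-window limiter: at most `limit` requests per key per window."""

    def __init__(
        self,
        limit: int = 10,
        window_seconds: int = 24 * 60 * 60,
        clock: Callable[[], float] = time.time,
    ) -> None:
        self._limit = limit
        self._window_seconds = window_seconds
        self._clock = clock
        # key -> (window index, requests counted in that window)
        self._counts: Dict[str, Tuple[int, int]] = {}

    def allow(self, key: str) -> bool:
        """Return True and count the request if `key` is still under its limit."""
        window = int(self._clock() // self._window_seconds)
        stored_window, count = self._counts.get(key, (window, 0))
        if stored_window != window:
            count = 0  # a new window has started, so the counter resets
        if count >= self._limit:
            return False
        self._counts[key] = (window, count + 1)
        return True


limiter = DailyRateLimiter(limit=10)
if not limiter.allow("203.0.113.7"):  # example client IP
    print("429: rate limit exceeded")
```

A fixed window is the simplest scheme; it allows a burst at the window boundary, which a sliding window would smooth out.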

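The full architecture (serverless function, rate limiting, CORS handling, error responses) is a production-ready pattern you can adapt to your own use case. If you are building something similar, studying how a working implementation handles edge cases (large files, unsupported formats, concurrent requests) is more valuable than any tutorial.

FAQ

Which API is best for image-to-prompt?

For image-to-prompt, Claude Vision usually produces the most structured, art-direction-aware output. GPT-4o is the most general-purpose choice within the OpenAI ecosystem. Gemini 1.5 Flash is the most economical option for high-throughput pipelines.

How much does an image-to-prompt API call cost per image?

Cost varies by provider. Claude Haiku is roughly $0.40 per 1,000 images, GPT-4o mini about $0.23, and Gemini 1.5 Flash is the cheapest at about $0.08. These are estimates for a typical 1024x1024 JPEG.

Should I send images to the API as Base64 or as a URL?

Base64 is recommended for production because its latency is more predictable. URLs are better suited to prototyping and quick tests. Each image is limited to 5MB.

How can I reduce image-to-prompt API costs?

Use a caching strategy keyed on a hash of the image content so the same image is never analyzed twice. For high-throughput workloads, choose Gemini Flash or GPT-4o mini. For quality-first workloads, Claude Haiku offers the best value for money.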
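
For Python services, the content-hash caching idea from this answer can be sketched as follows. The cache here is a plain in-process dictionary and `analyze` is a placeholder for whatever function calls the vision API; both are assumptions for illustration, and a multi-instance deployment would use a shared store such as Redis instead.

```python
import hashlib
from typing import Callable, Dict


def cache_key(image_bytes: bytes, target_model: str) -> str:
    """Key on the image content (not the filename) plus the target model."""
    digest = hashlib.sha256(image_bytes).hexdigest()
    return f"img2prompt:{digest}:{target_model}"


class PromptCache:
    """Memoize analysis results so identical image bytes are analyzed once per model."""

    def __init__(self, analyze: Callable[[bytes, str], dict]) -> None:
        self._analyze = analyze  # placeholder for the real API-calling function
        self._store: Dict[str, dict] = {}

    def get_or_analyze(self, image_bytes: bytes, target_model: str = "Midjourney") -> dict:
        key = cache_key(image_bytes, target_model)
        if key not in self._store:
            # Cache miss: pay for one API call, then reuse the result
            self._store[key] = self._analyze(image_bytes, target_model)
        return self._store[key]
```

Hashing the bytes means a renamed or re-uploaded copy of the same file still hits the cache, while any change to the pixels produces a new key.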